What Is Prompt Caching?

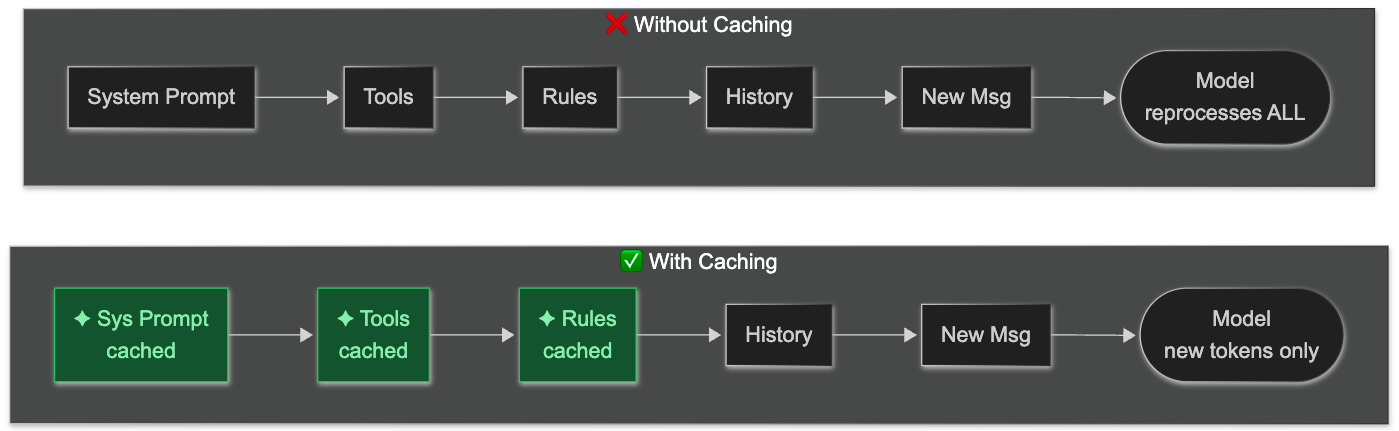

Every time you send a message to an AI coding agent, the full conversation gets re-sent to the model API — system prompt, tool definitions, project context, all previous messages, then your new one. Without caching, the model reprocesses every single token from scratch on every single turn.

Prompt caching stores the key-value computations the model already did on a stable prefix of that input. On the next request, if the prefix is identical, the model skips recomputation and reads from cache instead. Same output. Fraction of the cost.

This is not a developer-facing feature you opt into on most coding tools. It happens (or doesn't) depending on how your session is structured.

Do You Need to Care?

Two audiences, two reasons to care. Find yours below. Throughout this article, sections are tagged 👤 everyone (applies to both groups) or 🔑 BYOK (applies only to API/BYOK users).

👤 Subscription users — Copilot, Claude Code subscription

You don't pay per token, so cost isn't the immediate issue. But cache misses still affect you:

Latency. On a cache miss in a long session, the model may reprocess 50,000+ tokens before it starts responding. That can be the difference between a 1-second reply and a 4-second one.

Context quality. Some agents summarize or truncate context when the window fills. That rewrite changes the prefix, so the turn after it is a cache miss. Keeping threads focused postpones both the truncation and the miss.

Rate limits. Provider behavior differs. OpenAI says cached prompts still count toward rate limits; Anthropic says cache hits are not deducted against rate limits — so on Claude, keeping cache hits high directly extends how much you can do per window.

🔑 BYOK / API users — paying per token

The cost impact is direct and large:

Anthropic: Cached reads cost 10% of the normal input price. Cache writes cost 1.25x the normal price (a 25% surcharge) with the 5-minute TTL, or 2x with the 1-hour TTL. Net savings: 80–90% on input costs when hitting consistently.

OpenAI: Cached input pricing is model-dependent; many current GPT-5-family models list cached input at 10% of normal input. No write surcharge. Automatic on supported requests of 1,024 tokens or more.

In long Claude sessions, cache hit rate is one of the biggest cost levers you have — bigger than model choice in many cases.
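To make the Anthropic numbers concrete, here is a rough back-of-the-envelope sketch. The multipliers (0.10x for reads, 1.25x for a 5-minute write) come from the figures above. Everything else is a placeholder chosen for illustration: the $3 per million input tokens base price, the 40,000-token prefix, the 20-turn session, and the function name. It also assumes the prefix never grows and the cache never expires, which real sessions won't honor.

```python
def session_input_cost(prefix_tokens, fresh_tokens_per_turn, turns,
                       base_price_per_mtok, use_cache=True,
                       read_mult=0.10, write_mult=1.25):
    """Estimate input cost in dollars for a session with a fixed stable prefix.

    Simplification: the prefix is the same size every turn and the cache
    never expires between turns.
    """
    per_token = base_price_per_mtok / 1_000_000
    total = 0.0
    for turn in range(turns):
        if not use_cache:
            prefix_cost = prefix_tokens * per_token               # full price every turn
        elif turn == 0:
            prefix_cost = prefix_tokens * per_token * write_mult  # first turn writes the cache
        else:
            prefix_cost = prefix_tokens * per_token * read_mult   # later turns read from it
        total += prefix_cost + fresh_tokens_per_turn * per_token
    return total


# Hypothetical session: 40k-token prefix, 1k fresh tokens per turn, 20 turns, $3/MTok.
without_cache = session_input_cost(40_000, 1_000, 20, 3.00, use_cache=False)
with_cache = session_input_cost(40_000, 1_000, 20, 3.00, use_cache=True)
savings = 1 - with_cache / without_cache
print(f"without cache ${without_cache:.2f}, with cache ${with_cache:.2f}, saved {savings:.0%}")
```

With these made-up inputs the saving lands at roughly 82%, inside the 80–90% range quoted above. Shorten the session or add cache misses and the number drops quickly.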

The rule that applies to both

The cache works by exact prefix matching. Every byte in the prefix must be identical; a single changed character invalidates the cache from that point onward. Because the system prompt, tools, and rules sit at the very start of the request, a change there is effectively a full cache miss. This is why habits like adding an MCP server mid-session, switching models, or editing a rules file silently break it. No warning. Just a cold restart, slower responses, and (if you're paying per token) a higher bill.
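The prefix rule is easy to see with a toy comparison. This sketch is not how any provider implements its cache (real caches work on tokens and internal hashes). It only measures how many leading bytes of one serialized request match the previous one. The function name and sample strings are invented for the example.

```python
def shared_prefix_len(previous: bytes, current: bytes) -> int:
    """Return the number of leading bytes that are identical in both requests."""
    n = 0
    for a, b in zip(previous, current):
        if a != b:
            break
        n += 1
    return n


system = "You are a coding agent. Tools: read_file, run_tests.\n"
turn_1 = (system + "User: fix the login bug\n").encode()

# Appending a new message keeps the whole earlier request as a reusable prefix.
turn_2 = (system + "User: fix the login bug\nUser: now add a test\n").encode()

# Changing the tool list near the top cuts the shared prefix short,
# even though the conversation that follows is unchanged.
edited_system = system.replace("run_tests", "run_tests, deploy")
turn_2_edited = (edited_system + "User: fix the login bug\nUser: now add a test\n").encode()

print(shared_prefix_len(turn_1, turn_2), "of", len(turn_1), "bytes reusable")
print(shared_prefix_len(turn_1, turn_2_edited), "of", len(turn_1), "bytes reusable")
```

The second comparison stops inside the system prompt, so none of the conversation after it can be reused.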

How It Works

Request order matters. Coding agents often structure requests roughly as:

system prompt → tool definitions → project instructions/context → conversation history → current message

Static content goes first. The cache checkpoints after the stable prefix. Everything after the checkpoint — your new message — is always processed fresh. The longer the stable prefix, the more you save.
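A minimal sketch of that ordering, for readers assembling requests themselves. The (label, text) pairs are a generic illustration, not the wire format of any provider, and build_request is a hypothetical helper. The one detail worth copying is the deterministic tool serialization: the same tool set should always produce the same bytes.

```python
import json


def build_request(system_prompt, tools, project_context, history, new_message):
    """Return request parts in cache-friendly order: static first, dynamic last.

    history is a list of (role, text) pairs from earlier turns.
    """
    parts = [
        ("system", system_prompt),
        # sort_keys makes the serialization deterministic across runs.
        ("tools", json.dumps(tools, sort_keys=True)),
        ("context", project_context),
    ]
    parts += list(history)                # earlier turns, replayed unchanged
    parts.append(("user", new_message))   # the only genuinely new content
    return parts


tools = [{"name": "read_file"}, {"name": "run_tests"}]
turn_1 = build_request("You are a coding agent.", tools, "Stack: TypeScript",
                       [], "fix the login bug")

history = [("user", "fix the login bug"), ("assistant", "Done, patched auth.ts")]
turn_2 = build_request("You are a coding agent.", tools, "Stack: TypeScript",
                       history, "now add a test")

# The whole first request reappears, unchanged, at the start of the second.
print(turn_2[:len(turn_1)] == turn_1)
```

If anything in the first three parts changed between turns, that comparison would fail, and so would the cache lookup.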

Default cache TTL: 5 minutes (Anthropic), 5–10 minutes (OpenAI). A coffee break means a cold restart. OpenAI offers 24-hour extended retention on GPT-5.1; Anthropic offers a 1-hour option.

| Provider | Cached read cost | Write surcharge | Default TTL |
|---|---|---|---|
| Anthropic (Claude) | 10% of normal | +25% on write | 5 min |
| OpenAI (GPT) | model-dependent; often 10% on current GPT-5 models | none | 5–10 min |
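If you script against the API, a small idle-time check can tell you whether the next request is likely to hit a warm cache. This is a sketch under assumptions: the TTL is treated as time since the last request that used the prefix, the OpenAI value uses the lower end of the 5–10 minute range to stay conservative, and the names are hypothetical.

```python
import time

# Default TTLs in seconds, taken from the table above (OpenAI: lower bound).
DEFAULT_TTL_SECONDS = {"anthropic": 5 * 60, "openai": 5 * 60}


def cache_probably_warm(last_request_ts, provider, now=None):
    """Guess whether a cached prefix is still alive after an idle period."""
    now = time.time() if now is None else now
    return (now - last_request_ts) < DEFAULT_TTL_SECONDS[provider]


last = time.time() - 120                      # last request two minutes ago
print(cache_probably_warm(last, "anthropic"))

last = time.time() - 15 * 60                  # back from a coffee break
print(cache_probably_warm(last, "anthropic"))
```

It is only a guess: providers can evict earlier than the nominal TTL, and extended-retention options change the numbers.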

What Breaks the Cache (All Agents)

These habits invalidate the cache prefix regardless of which coding agent you use. Avoid them.

| Action | Effect on cache | Applies to |
|---|---|---|
| Add/remove tools or MCP servers mid-session | Full reset: tool definitions are in the prefix | Claude Code, Copilot |
| Switch models mid-session | Full reset: caches are model-specific | Claude Code |
| Edit your rules file during a session | Cache miss on all subsequent turns | All (CLAUDE.md, copilot-instructions.md) |
| Dynamic values in rules/system prompt | 0% hit rate: every turn looks "new" | All |
| Long pause (> 5 min for Anthropic) | Cache expired, cold restart on next turn | All |
| Sprawling, unfocused threads | Context fills, triggers summarization/reset | All |

Universal rule: The cache works on byte-identical prefixes. Static content first, dynamic content last, no edits mid-session.

Keep Your Rules File Stable (All Agents)

Every coding agent has a project rules file that becomes part of the cached prefix:

| Agent | Rules file |
|---|---|
| Claude Code | CLAUDE.md |
| GitHub Copilot | .github/copilot-instructions.md |

Two rules apply to both:

1. No dynamic values — timestamps, usernames, session IDs, "last updated" lines all break caching:

    # ❌ Bad: any of these break the cache
    Updated: 2026-05-16
    Last edited by: @username
    Session: {{uuid}}

    # ✅ Good: static project conventions only
    Project: my-api
    Stack: Next.js 16, Supabase, TypeScript
    Always use TypeScript strict mode.
    API routes return NextResponse with typed payloads.

2. Keep it small — these files are sent on every turn. Claude Code warns above 40k characters. A bloated rules file costs tokens even on cache hits and crowds out conversation history. Trim aggressively; move detailed reference docs to separate files the agent can read on demand.
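Both rules can be checked mechanically before a session starts. The sketch below is a heuristic linter, not part of any agent: the patterns, the function name, and the warning text are assumptions, and the 40,000-character limit is simply the Claude Code threshold mentioned above. Expect false positives, such as a version string that happens to look like a date.

```python
import re

MAX_CHARS = 40_000  # size threshold mentioned above for Claude Code

# Heuristic patterns for values that tend to change between sessions.
DYNAMIC_PATTERNS = {
    "ISO date": re.compile(r"\b\d{4}-\d{2}-\d{2}\b"),
    "template placeholder": re.compile(r"\{\{.*?\}\}"),
    "last edited/updated line": re.compile(
        r"^\s*(last\s+(edited|updated)|updated)\b", re.IGNORECASE
    ),
}


def lint_rules_text(text):
    """Return a list of human-readable warnings for a rules file's contents."""
    warnings = []
    if len(text) > MAX_CHARS:
        warnings.append(f"file is {len(text)} characters (over {MAX_CHARS})")
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in DYNAMIC_PATTERNS.items():
            if pattern.search(line):
                warnings.append(f"line {lineno}: possible {label}")
    return warnings


bad = "Updated: 2026-05-16\nLast edited by: @username\nSession: {{uuid}}\n"
good = "Project: my-api\nStack: Next.js 16, Supabase, TypeScript\n"

for warning in lint_rules_text(bad):
    print(warning)
print(lint_rules_text(good))
```

To check a real file, read it with pathlib.Path("CLAUDE.md").read_text() and pass the result to lint_rules_text. Running it in a pre-commit hook catches a stray "last updated" line before it reaches the team.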

Agent-Specific Settings

How to check cache performance and configure each agent.

Claude Code

Check cache performance:

In-session, type /cost to see cumulative spending and cache read/write token counts (same as Settings → Usage tab).

🔑 BYOK only: The raw API response includes cache fields:

    {
      "input_tokens": 1200,
      "cache_creation_input_tokens": 44100, // tokens written (5m: 1.25x, 1h: 2x)
      "cache_read_input_tokens": 44100, // tokens read (costs 10%)
      "output_tokens": 312
    }

If cache_read_input_tokens is near zero after turn 2+, something is breaking the prefix.
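A per-turn hit rate makes that check easy to automate. This sketch uses the usage fields shown above and assumes that total input is the sum of input_tokens, cache_creation_input_tokens, and cache_read_input_tokens. The function name and the two sample turns are illustrative.

```python
def cache_hit_rate(usage):
    """Fraction of a response's input tokens that were served from cache."""
    read = usage.get("cache_read_input_tokens", 0)
    written = usage.get("cache_creation_input_tokens", 0)
    fresh = usage.get("input_tokens", 0)
    total = read + written + fresh
    return read / total if total else 0.0


# Turn 1: nothing cached yet, the prefix is written.
cold_turn = {"input_tokens": 1200, "cache_creation_input_tokens": 44100,
             "cache_read_input_tokens": 0, "output_tokens": 312}
# Turn 2: the same prefix is read back.
warm_turn = {"input_tokens": 1200, "cache_creation_input_tokens": 0,
             "cache_read_input_tokens": 44100, "output_tokens": 312}

print(f"cold: {cache_hit_rate(cold_turn):.0%}, warm: {cache_hit_rate(warm_turn):.0%}")
```

A healthy long session should look like the warm turn on almost every request. A run of cold-looking turns points to something in the prefix changing.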

Enable the cache-stability flag (especially for teams):

    # Print mode (-p): scripted / non-interactive runs
    claude -p --exclude-dynamic-system-prompt-sections "Summarize the PR changes"

    # Interactive sessions
    claude --exclude-dynamic-system-prompt-sections

This moves per-machine sections (cwd, env info, git status) out of the system prompt into the first user message — making the prefix identical across users. Only applies with the default system prompt (ignored when --system-prompt is set).

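Configure MCP servers up front. Project-scoped servers are declared in .mcp.json at the repository root:

    {
      "mcpServers": {
        "filesystem": {
          "command": "npx",
          "args": ["-y", "@modelcontextprotocol/server-filesystem", "."]
        }
      }
    }

Pin the model in .claude/settings.json so it doesn't change mid-session:

    {
      "model": "claude-opus-4-7"
    }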

Adding/removing MCP servers mid-session resets the cache.

GitHub Copilot

Copilot doesn't expose cache controls or hit metrics. The only lever is keeping context stable.

Verify your instructions file is being used — in .vscode/settings.json:

    {
      "github.copilot.chat.codeGeneration.useInstructionFiles": true
    }

Then ask Copilot Chat: "what are your instructions?" — the answer should reflect your .github/copilot-instructions.md.

Cache behavior is fully managed by GitHub. Latency is your only signal: consistently fast responses suggest the cache is working.